I am perplexed by the data my meter is reading. I am using a variac which is variable from 0 to 280 VAC, and two digital multimeters in the circuit: one to read voltage (in parallel; this meter is a Fluke 21, which has a 300 mA internal fuse) and the other to read current (in series; this meter is the Fluke 177 True RMS Meter, which has a 10 A internal fuse). Using my variac I apply 3 voltages: 108 VAC, 120 VAC and 132 VAC. My current readings and calculated power are as follows: 108 VAC - 1.15 A - 124.2 W, 120 VAC - 1.22 A - 146.4 W, 132 VAC - 1.28 A - 169.0 W. The only load in my circuit is a 150 W halogen lamp. What I am perplexed over is my power calculations given Ohm's Law theory. When voltage is increased, current should decrease, right? Please help, what am I doing wrong?

Calculating power with Fluke 177 True RMS Meter

- Thread starter pimentelr

- Status

- Not open for further replies.

- Location

- Connecticut

- Occupation

- Engineer

By Ohm's law, current would decrease on an increase in voltage IF you had constant power (P = VI).

But you don't have constant power, you have a constant resistance (in a simplified sense.)

By Ohm's law V=IR, an increase in voltage would give a proportional increase in current for a constant resistance.
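To put numbers on that, here is a minimal sketch using the 120 V and 132 V readings from the original post. It compares what a fixed resistance and a fixed-power load would each draw at 132 V against the measured 1.28 A. The function and variable names are only illustrative, and V x I is treated as watts, which assumes a purely resistive load.

```python
# Readings taken from the original post.
V_REF, I_REF = 120.0, 1.22        # reference point: 120 VAC, 1.22 A
V_HIGH, I_MEASURED = 132.0, 1.28  # reading at the higher voltage

def current_if_constant_resistance(volts, ref_volts, ref_amps):
    """I = V / R, with R fixed at the value implied by the reference point."""
    ohms = ref_volts / ref_amps
    return volts / ohms

def current_if_constant_power(volts, ref_volts, ref_amps):
    """I = P / V, with P fixed at the value implied by the reference point."""
    watts = ref_volts * ref_amps
    return watts / volts

i_const_r = current_if_constant_resistance(V_HIGH, V_REF, I_REF)
i_const_p = current_if_constant_power(V_HIGH, V_REF, I_REF)

print(f"constant resistance predicts {i_const_r:.3f} A at {V_HIGH:.0f} V")
print(f"constant power predicts      {i_const_p:.3f} A at {V_HIGH:.0f} V")
print(f"measured                     {I_MEASURED:.3f} A")
```

The measured current rises with voltage, as the constant-resistance model says it should, rather than falling as a constant-power load would require.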

GeorgeB

ElectroHydraulics engineer (retired)

- Location

- Greenville SC

- Occupation

- Retired

And in addition, the product of RMS amps and RMS volts does not in general equal watts; it is still volt-amperes. As an example, get yourself a power factor correcting capacitor and hook it up ... current with very little power.
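A rough sketch of the difference, assuming sinusoidal voltage and current so that real power is P = V x I x cos(phi), where phi is the phase angle between them. The 1.22 A figure is borrowed from the original post purely for illustration, and the ideal 90 degree capacitor is an assumption; a real capacitor has small losses.

```python
import math

def apparent_power_va(v_rms, i_rms):
    """Volt-amperes: simply the product of the two RMS readings."""
    return v_rms * i_rms

def real_power_w(v_rms, i_rms, phase_deg):
    """Watts for sinusoidal waveforms: V * I * cos(phi)."""
    return v_rms * i_rms * math.cos(math.radians(phase_deg))

# Resistive lamp: current in phase with voltage (phi = 0).
lamp_va = apparent_power_va(120.0, 1.22)
lamp_w = real_power_w(120.0, 1.22, 0.0)

# Ideal capacitor drawing the same current: phi = 90 degrees (assumed ideal).
cap_va = apparent_power_va(120.0, 1.22)
cap_w = real_power_w(120.0, 1.22, 90.0)

print(f"lamp:      {lamp_va:.1f} VA, {lamp_w:.1f} W")
print(f"capacitor: {cap_va:.1f} VA, {cap_w:.1f} W")
```

Both loads show the same volt-amperes on the meters, but only the lamp turns them into watts.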

Thank You

David,

Thank you for your explanation of my original question. I now understand how the power is calculated when comparing the 150 watt lamp to a constant resistance. It has been some time since I was in apprenticeship school and I needed a little refresher. Please elaborate more on RMS, in correlation to volt-amperes and watts. Thank you.

Here are a couple of real world examples which I am working on:

1. Can I use an RMS multimeter to measure voltage and current and calculate power in a simple sense? Is it possible to use a formula to convert my volt-amperes into power (watts), or do I need a non-RMS meter (I assume these are rare)?

2. Will an RMS multimeter produce erroneous current readings when measuring in series with a complex circuit board which may have transformers, diodes, rectifiers, capacitors, etc.?

Your knowledge and help is greatly appreciated. Thank You

The only load in my circuit is a 150W Halogen Lamp. What I am perplexed over is my power calculations given Ohm's Law Theory. When voltage is increased, current should decrease, right? Please help, what am I doing wrong?

That is an oversimplification. Your light bulb is a resistive load. With a resistive load the amperage will vary with the voltage. When you decrease the voltage, the amperage will also decrease. The wattage will also decrease and the amount of light from the bulb will also decrease. This is called a linear load, I believe. When the voltage is increased the light from the bulb will also increase and the amperage will increase (of course the life of the bulb will be decreased also). In a motor circuit, the amperage will change inversely to the voltage as you expected. The amperage will go up when the voltage goes down because the motor is trying to develop the same amount of power.

If you ever install baseboard electric heat (resistive load) you will notice that it is often dual rated: for example a unit may be rated 1500 watts at 240 volts and 1200 watts at 208 volts.

Hope that helps...

gar

Senior Member

- Location

- Ann Arbor, Michigan

- Occupation

- EE

100720-1438 EST

pimentelr:

For a resistive load, a sine wave voltage source, and stationary conditions (in this case meaning steady state), RMS voltage * RMS current does equal power. This is also true if the voltage is DC. It assumes RMS is measured over an integral number of cycles, or that the averaging time used to measure RMS is long compared to the cycle period.
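This can be checked numerically. The sketch below samples exactly one cycle of a 120 V RMS sine wave, drives it into a fixed resistance, and compares the average of the instantaneous power v*i with the product of the two RMS values. The 98.4 ohm resistance and the sample count are arbitrary assumptions for illustration.

```python
import math

def sample_one_cycle(amplitude, n=1000):
    """Return n evenly spaced samples spanning exactly one sine cycle."""
    return [amplitude * math.sin(2.0 * math.pi * k / n) for k in range(n)]

def rms(samples):
    """Root of the mean of the squares."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))

R_OHMS = 98.4  # hypothetical fixed resistance

# Peak of a 120 V RMS sine wave is 120 * sqrt(2).
v = sample_one_cycle(120.0 * math.sqrt(2.0))
# Resistive load: the current follows the voltage with no phase shift.
i = [volts / R_OHMS for volts in v]

average_power = sum(a * b for a, b in zip(v, i)) / len(v)
rms_product = rms(v) * rms(i)

print(f"V rms           = {rms(v):.3f} V")
print(f"mean of v*i     = {average_power:.3f} W")
print(f"V rms * I rms   = {rms_product:.3f} VA")
```

If the samples covered a non-integral number of cycles, or the current were phase shifted, the two results would no longer agree.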

Were you to have a constant resistance load as you increased the voltage, then power would increase as the square of the voltage ratio.

Your measurements do not agree with the square of the voltage. The reason is that a tungsten filament lamp is not a constant resistance. Calculate the resistance for each of your three measurements. Also measure the resistance of the bulb with your meter after it has fully cooled. Next take it into bright sunlight and again read its resistance with your meter.
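A short sketch of that calculation, using only the three readings from the original post. It works out R = V / I at each point and compares the measured power ratio between 108 V and 132 V with what the square law would give for a fixed resistance. V x I is treated as watts here, which assumes the lamp is purely resistive.

```python
# (volts, amps) readings from the original post.
readings = [(108.0, 1.15), (120.0, 1.22), (132.0, 1.28)]

# Resistance implied by each reading, R = V / I.
resistances = [v / i for v, i in readings]

# Power at each reading, P = V * I (resistive load assumed).
powers = [v * i for v, i in readings]

v_low, v_high = readings[0][0], readings[-1][0]
square_law_ratio = (v_high / v_low) ** 2   # fixed-resistance prediction
measured_ratio = powers[-1] / powers[0]    # what the meters actually show

for (v, i), r, p in zip(readings, resistances, powers):
    print(f"{v:5.0f} V  {i:.2f} A  R = {r:6.2f} ohm  P = {p:6.2f} W")

print(f"square-law power ratio: {square_law_ratio:.3f}")
print(f"measured power ratio:   {measured_ratio:.3f}")
```

The implied resistance climbs as the voltage goes up, which is why the measured power grows more slowly than the square of the voltage.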

You could use the bulb as a thermometer. Maybe better to classify it as a radiometer.

Also you can get an approximate measure of the hot filament temperature by its change in resistance from some known temperature.
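As a very rough sketch of that estimate: one commonly used approximation is that tungsten resistance scales about as absolute temperature to the power 1.2. That exponent, the 293 K room temperature, and especially the 6.5 ohm cold resistance below are all assumptions for illustration (no cold reading was given in this thread), so measure your own bulb before trusting any number that comes out.

```python
T_COLD_K = 293.0     # assumed room temperature, kelvin
R_COLD_OHMS = 6.5    # HYPOTHETICAL cold resistance; measure your own bulb
EXPONENT = 1.2       # assumed: R roughly proportional to T**1.2 for tungsten

def filament_temperature_k(r_hot, r_cold=R_COLD_OHMS,
                           t_cold=T_COLD_K, exponent=EXPONENT):
    """Invert R_hot / R_cold = (T_hot / T_cold)**exponent for T_hot."""
    return t_cold * (r_hot / r_cold) ** (1.0 / exponent)

# Hot resistance implied by the 120 V reading in the original post.
r_hot = 120.0 / 1.22
t_hot = filament_temperature_k(r_hot)

print(f"hot resistance: {r_hot:.1f} ohm")
print(f"estimated filament temperature: {t_hot:.0f} K")
```

The result is only as good as the cold resistance reading and the power-law approximation, so treat it as an order-of-magnitude check rather than a measurement.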

.

gar

Senior Member

- Location

- Ann Arbor, Michigan

- Occupation

- EE

100720-1545 EST

Power dissipation in an electrical circuit was studied by Joule and he related power to current and resistance. This is named Joule's first law. This is to be distinguished from Ohm's law that is the relationship of current to voltage and resistance.

See the following for reference:

http://en.wikipedia.org/wiki/Joule's_laws

.

SG-1

Senior Member

- Location

- Ware Shoals, South Carolina

To illustrate gar's point about the filament resistance changing with temperature, here is a waveform showing a 24W incandescent light bulb. Current is blue & voltage is red.

dbuckley

Senior Member

- Location

- Canterbury, New Zealand

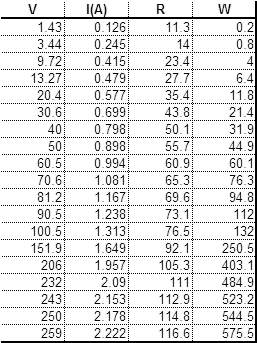

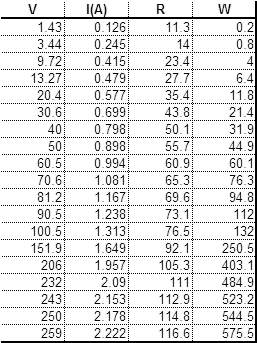

A while ago I did exactly the same thing, with a 500W 240V linear halogen tube...

gar

Senior Member

- Location

- Ann Arbor, Michigan

- Occupation

- EE

100721-1028 EST

pimentelr:

For a definition of Ohm's law see http://en.wikipedia.org/wiki/Ohm's_law . Read the first paragraph, then "Circuit Analysis", and last at the end "History".

The discussion at http://www.the12volt.com/ohm/ohmslaw.asp is wrong to describe power as part of Ohm's law.

Ohm thru experiment discovered that current was proportional to voltage thru a fixed resistor, and inversely proportional to resistance. Ohm did not do power or energy measurements in the creation of Ohm's law.

If you combine Ohm's and Joule's laws, then you obtain various equations for power, such as, P = I^2*R .
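Written out, the combination looks like this, taking Ohm's law as V = IR and Joule's first law as P = I^2 R, for a resistive element:

```latex
\begin{align}
V &= I R && \text{(Ohm's law)} \\
P &= I^2 R && \text{(Joule's first law)} \\
P &= I \,(I R) = V I && \text{(substitute } IR = V\text{)} \\
P &= \left(\frac{V}{R}\right)^{2} R = \frac{V^2}{R} && \text{(substitute } I = V/R\text{)}
\end{align}
```

The last two lines are the familiar power formulas; neither one is Ohm's law on its own.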

There is no good reason to muddy the waters with respect to what is the definition of Ohm's law. Having terms that have clear cut definitions is important to clear communication.

.
