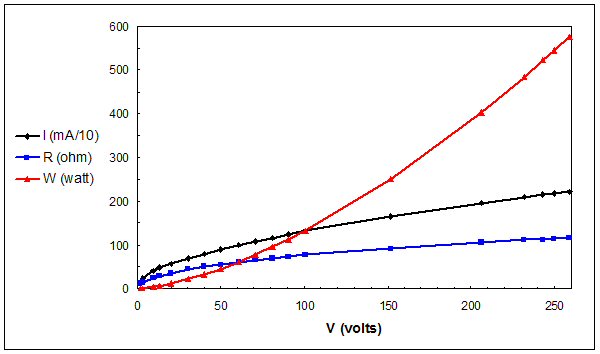

In most cases I typically deal with, raising the voltage for a particular load reduces the current draw, and vice versa. I have always understood this to be because the load power (kW) stays the same, so the voltage and current simply adjust to deliver that power. I have always understood this to be the case for inductive loads such as motors and transformers.

With resistive loads, however, I am having a hard time understanding this, because Ohm's law does not seem to agree. If I have a resistive load such as a water heater or oven and I raise the voltage, I would expect the current to increase in proportion to the voltage, based on V = IR. So for a resistive load, saying that keeping the kW (power) the same while raising the voltage leads to a decrease in current would not agree with Ohm's law. Can someone help explain?
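To make the two cases concrete, here is a rough sketch of the arithmetic as I understand it. The numbers are made up for illustration (a hypothetical load rated 4800 W at 240 V), and it assumes an ideal load: unity power factor, and a heating element whose resistance does not change with temperature. The function names are my own and not from any library.

```python
def constant_power_current(power_w, voltage_v):
    """Current drawn if the load power is held fixed: I = P / V."""
    return power_w / voltage_v


def resistive_current(resistance_ohm, voltage_v):
    """Current drawn by a fixed resistance (Ohm's law): I = V / R."""
    return voltage_v / resistance_ohm


# Hypothetical nameplate values, chosen only to give round numbers.
P_RATED_W = 4800.0
V_RATED_V = 240.0

# Resistance implied by the nameplate rating: R = V^2 / P.
R_OHM = V_RATED_V ** 2 / P_RATED_W

if __name__ == "__main__":
    for v in (208.0, 240.0, 480.0):
        i_cp = constant_power_current(P_RATED_W, v)
        i_r = resistive_current(R_OHM, v)
        p_r = v * i_r  # power actually dissipated by the fixed resistance
        print(f"V={v:6.1f} V | constant-power load: I={i_cp:6.2f} A | "
              f"fixed resistance: I={i_r:6.2f} A, P={p_r:8.1f} W")
```

Under these assumptions the two models agree only at the rated voltage. Above it, the constant-power current falls while the fixed-resistance current rises, and the power in the resistance grows with the square of the voltage instead of staying at the rated kW.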

Thanks